The INSWAPPER_128.ONNX model is a popular face-swapping model from the InsightFace project. It is distributed as a single ONNX file and is often paired with face-restoration tools.

This article explains how to download and use the model through several methods. It also covers the model's key features, its 128×128 working resolution, and the known limitations to keep in mind.

Discover how to download the model, run your first face swap, and combine it with restoration tools for cleaner results.

KEY FEATURES OF INSWAPPER_128.ONNX MODEL

The inswapper_128.onnx is a powerful tool for face swapping and restoration. Here are some of its key features:

1. 128×128 FACE PROCESSING

The INSWAPPER_128.ONNX model works on aligned face crops at a fixed resolution of 128×128 pixels. That is where the “128” in its name comes from.

Only the face region passes through the model, so the rest of the image keeps its original resolution once the swapped face is pasted back. The crop size is adequate for faces that fill a modest part of the frame.

For close-up portraits or large output images, however, a 128×128 face can look soft. For this reason the swap is often followed by a face-restoration or upscaling pass.

2. EASE OF USE

The INSWAPPER_128.ONNX model is easy to adopt because it is a single pretrained file. It swaps in a face from one source photo, with no per-person training or fine-tuning.

The model itself has no user interface. Its ease of use comes from the tools built around it, such as InsightFace’s Python package, community front ends, and Discord bots. These tools handle face detection, alignment, and pasting the result back into the image.

Whether you’re a seasoned professional or a beginner exploring AI-driven image editing, these tools let you start face-swapping projects with minimal setup and configuration.

3. FACE RESTORATION

INSWAPPER_128.ONNX does not perform face restoration itself. Restoration is a separate step that improves the quality of swapped faces, and it is usually handled by a dedicated restoration model such as GFPGAN or CodeFormer.

Because the swap is generated at 128×128, restoration is particularly useful in two cases: when the face is large in the frame, and when the source or target image is low quality or damaged.

Restoration models use deep learning to rebuild facial details such as skin texture, color tones, and fine features. This makes swapped faces look more natural and realistic.

Whether you’re refining old photographs or sharpening modern images, pairing the swapper with a restoration model adds a valuable step to your image-editing workflow.

4. COMPATIBILITY

The compatibility of the INSWAPPER_128.ONNX model is a key aspect that enhances its versatility and usability.

A range of tools use the model. AUTOMATIC1111’s Stable Diffusion web UI can run it through face-swap extensions such as roop and ReActor. Images generated with Midjourney can be face-swapped on Discord through InsightFace’s bot.

This compatibility means users can apply the model within their preferred environments, whether they’re working in desktop applications or on cloud-based platforms.

Additionally, because the model is stored in the ONNX format, it can be loaded directly with ONNX Runtime and other ONNX-compatible frameworks and libraries.

This broad compatibility makes the INSWAPPER_128.ONNX model a flexible solution for diverse image manipulation needs, catering to a broad spectrum of users and workflows.

5. ONNX FORMAT

The ONNX format plays a crucial role in the functionality and adaptability of the INSWAPPER_128.ONNX model.

By being in the ONNX (Open Neural Network Exchange) format, this model enjoys compatibility with various AI frameworks, libraries, and platforms, ensuring seamless integration and interoperability.

This standardized format enables developers and users to leverage the model’s capabilities across different environments, facilitating easier deployment and scaling of image manipulation applications.

Moreover, ONNX models can be run from several programming languages; ONNX Runtime, for example, offers APIs for Python, C, C++, C#, Java, and JavaScript. This makes the model accessible to a wider community of developers and researchers.

6. LARGE FILE SIZE

The INSWAPPER_128.ONNX model file is approximately 554 MB because it stores all of the network’s learned weights.

File size alone does not guarantee output quality. It does, however, indicate a sizeable network that must be read from disk and held in memory during inference.

It’s therefore essential to consider the storage, memory, and compute requirements of the model, particularly when deploying it on resource-constrained devices or platforms.

On a typical desktop or GPU-equipped machine, this footprint is manageable, and the model remains a popular choice for face swapping despite its size.
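Before downloading, a quick free-space check helps you avoid a half-written file. The minimal sketch below uses `shutil.disk_usage` from the Python standard library. The 554 MB figure is the approximate size quoted above, not an exact byte count. The 10% headroom is an arbitrary safety margin, not a requirement of the model.

```python
import shutil

APPROX_MODEL_BYTES = 554_000_000  # ~554 MB as quoted above; an estimate, not an exact size


def has_room_for(directory: str, needed_bytes: int = APPROX_MODEL_BYTES,
                 headroom: float = 1.1) -> bool:
    """True if the filesystem holding `directory` has needed_bytes plus a safety margin free."""
    free = shutil.disk_usage(directory).free
    return free >= needed_bytes * headroom
```

For example, `has_room_for(".")` checks the current directory before you start the download. Keep in mind that loading the model into memory needs at least as much RAM as the file size.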

Here’s a simple table summarizing the key features and information about the INSWAPPER_128.ONNX model:

| Feature | Description |
|---|---|
| 128×128 Face Processing | Swaps aligned 128×128 face crops and pastes them back into the full image |
| Ease of Use | Single pretrained file with no per-person training; wrapped by user-friendly tools |
| Face Restoration | Paired with separate models (e.g., GFPGAN, CodeFormer) to improve swapped faces |
| Compatibility | Used by tools such as AUTOMATIC1111 extensions and InsightFace’s Discord bot |
| ONNX Format | Enables compatibility with AI frameworks |
| Large File Size | Approximately 554 MB; plan for storage and memory |

This table provides a quick overview of the model’s capabilities and technical specifications, aiding users in understanding its strengths and suitability for their image manipulation needs.

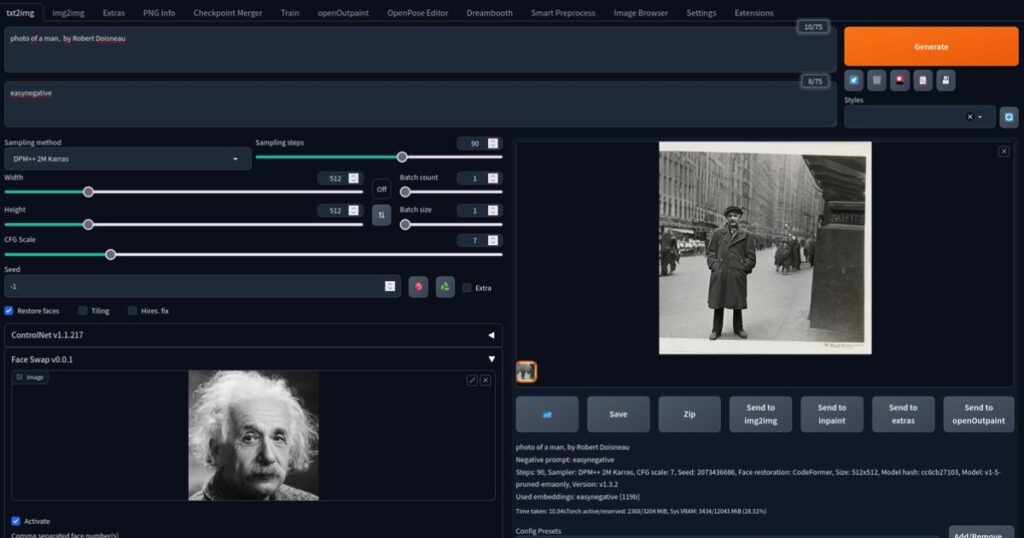

HOW TO DOWNLOAD AND USE INSWAPPER_128.ONNX MODEL

METHOD 1: THE INSWAPPER_128.ONNX MODEL DOWNLOAD USING GOOGLE DRIVE OR HUGGING FACE

Method 1 for downloading the INSWAPPER_128.ONNX model involves using either Google Drive or Hugging Face platforms.

Users can access the model through a provided Google Drive link or directly from the Hugging Face repository.

This method offers a straightforward approach, allowing users to download the model file with ease.

Google Drive and Hugging Face are widely used for sharing AI models. However, copies of this file are frequently re-uploaded by third parties, so check two things after downloading: that the file is about 554 MB, and, where the source publishes a checksum, that the hash matches.

Overall, Method 1 provides a convenient way for users to acquire the INSWAPPER_128.ONNX model for their face-swapping projects.

1. DOWNLOAD THE ONNX MODEL

To download the ONNX model, users can follow a simple and straightforward process.

First, they need to access the designated link provided, either through Google Drive or Hugging Face repository.

Upon reaching the link, users can click on the download button or use the provided command to initiate the download process.

Once the download is complete, users will have the ONNX model file (.onnx) saved on their device, ready for use in their projects.
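Because the file is often fetched from mirrors, it is worth checking it before use. The sketch below uses only the Python standard library. It rejects files that are missing or suspiciously small, and it can optionally compare a SHA-256 hash.

The 500,000,000-byte threshold is an assumption based on the ~554 MB size quoted in this article. No official checksum is given here, so `expected_sha256` is left for you to fill in if your download source publishes one.

```python
import hashlib
import os
from typing import Optional


def file_sha256(path: str, chunk_size: int = 1 << 20) -> str:
    """Hash a file in 1 MiB chunks so the whole model never has to sit in memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def check_download(path: str, min_bytes: int = 500_000_000,
                   expected_sha256: Optional[str] = None) -> bool:
    """Return True if the file exists, is not truncated, and matches the hash (if given)."""
    if not os.path.isfile(path):
        return False
    # Assumption: anything far below the ~554 MB quoted size is an incomplete download
    if os.path.getsize(path) < min_bytes:
        return False
    if expected_sha256 is not None:
        return file_sha256(path) == expected_sha256.strip().lower()
    return True
```

For example, `check_download("inswapper_128.onnx")` returns `False` for an interrupted download that left only a partial file.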

This step is crucial as it ensures that users have the necessary model file to proceed with utilizing the INSWAPPER_128.ONNX model for face swapping and restoration tasks.

2. INSTALL THE NECESSARY LIBRARIES

To install the necessary libraries for using the INSWAPPER_128.ONNX model, users can follow a few straightforward steps.

First, they need to ensure that they have Python installed on their system, as the required libraries are typically installed using pip, the Python package installer.

Next, users can open their terminal or command prompt and execute the command “pip install onnxruntime” to install the ONNX Runtime library, which is essential for running ONNX models like INSWAPPER_128.ONNX.

This installation process is relatively quick and automated, making it accessible even for users with basic programming knowledge.

Once the ONNX Runtime library is installed successfully, users can proceed with loading and utilizing the INSWAPPER_128.ONNX model for face swapping and restoration tasks in Python environments.
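You can also confirm the installation from inside Python. This is useful in notebooks, where `pip` may target a different interpreter than the one running your code. Note that `onnxruntime` and `onnxruntime-gpu` are separate pip distributions, but both provide the importable `onnxruntime` module. The status messages below are illustrative.

```python
import importlib.metadata
import importlib.util
from typing import Optional


def installed_version(dist_name: str) -> Optional[str]:
    """Version string of an installed pip distribution, or None if it is absent."""
    try:
        return importlib.metadata.version(dist_name)
    except importlib.metadata.PackageNotFoundError:
        return None


def onnxruntime_status() -> str:
    # Both the CPU and GPU packages install the same importable module
    if importlib.util.find_spec("onnxruntime") is None:
        return "onnxruntime is not installed; run: pip install onnxruntime"
    for dist in ("onnxruntime-gpu", "onnxruntime"):
        version = installed_version(dist)
        if version is not None:
            return f"{dist} {version} is installed"
    return "onnxruntime is importable, but no matching distribution metadata was found"


print(onnxruntime_status())
```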

3. LOAD THE ONNX MODEL

Loading the ONNX model, such as the INSWAPPER_128.ONNX model, involves several steps to ensure proper execution and utilization.

First, users must import the necessary libraries in their Python environment, such as “import onnxruntime as ort” to access the ONNX Runtime functionality.

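Then, they can create an instance of the InferenceSession class from the onnxruntime library. They specify the path to the INSWAPPER_128.ONNX model file and, for GPU-enabled builds, the list of execution providers to use. Recent ONNX Runtime versions require this providers argument when more than one provider is available.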

Additionally, users can customize the session options, such as setting the number of threads for intra-operation parallelism, to optimize the model’s performance based on their system specifications.

Once the model is loaded successfully, users can proceed to prepare input data, run inference, and post-process the output as needed for their face-swapping and restoration tasks, leveraging the capabilities of the INSWAPPER_128.ONNX model effectively.
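A minimal loading sketch follows. It uses ONNX Runtime’s documented `SessionOptions`, `get_available_providers`, and `InferenceSession` APIs. The thread count of 4 and the CUDA-then-CPU preference are assumptions to adjust for your machine. Printing the inputs and outputs is the most reliable way to learn what the model expects, rather than relying on names or shapes quoted elsewhere.

```python
from typing import List, Sequence


def pick_providers(available: Sequence[str]) -> List[str]:
    """Prefer CUDA when this onnxruntime build offers it; always keep CPU as the fallback."""
    preferred = ["CUDAExecutionProvider", "CPUExecutionProvider"]
    chosen = [p for p in preferred if p in available]
    return chosen or ["CPUExecutionProvider"]


def load_session(model_path: str, num_threads: int = 4):
    """Create an ONNX Runtime session and print the model's inputs and outputs."""
    import onnxruntime as ort  # imported lazily so pick_providers works without it

    options = ort.SessionOptions()
    options.intra_op_num_threads = num_threads  # threads used within a single operator
    providers = pick_providers(ort.get_available_providers())
    session = ort.InferenceSession(model_path, sess_options=options, providers=providers)
    for node in session.get_inputs():
        print("input ", node.name, node.shape, node.type)
    for node in session.get_outputs():
        print("output", node.name, node.shape, node.type)
    return session
```

For inswapper_128.onnx you would expect one image input and one identity-embedding input. Running `session = load_session("inswapper_128.onnx")` prints their exact names and shapes.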

4. PREPARE THE INPUT DATA

Preparing the input data for the INSWAPPER_128.ONNX model involves several crucial steps to ensure accurate and effective results.

First, users need to format their input data to match the model’s requirements. The model takes two inputs:

- an aligned 128×128 crop of the face to be replaced, taken from the target image;
- an identity embedding of the source face, produced by a separate face-recognition model (InsightFace’s recognition model in the reference pipeline).

This means detecting and aligning the target face, then resizing, normalizing, and converting the image data to the expected size, layout, and color channel order.

Additionally, users should follow the preprocessing used by the reference implementation, such as the exact scaling and channel order, because the ONNX file itself does not spell these details out.

Properly prepared input data ensures that the INSWAPPER_128.ONNX model can accurately process and generate desired outputs during face-swapping and restoration tasks, enhancing the overall quality of the results.
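The sketch below shows the array handling for both inputs under these stated assumptions:

- the face crop is already aligned, 128×128, and in BGR order (as OpenCV loads images);
- it is converted to RGB, scaled to [0, 1], and laid out as NCHW;
- the embedding is L2-normalized.

These assumptions match our reading of InsightFace’s reference wrapper, but verify them against `session.get_inputs()` and that code. As far as we know, the reference wrapper also multiplies the embedding by a projection matrix stored inside the ONNX file before normalizing. That step is deliberately left out here, so this is not a drop-in replacement.

```python
import numpy as np


def to_model_input(face_bgr: np.ndarray, size: int = 128) -> np.ndarray:
    """Aligned BGR uint8 crop (size x size x 3) -> 1 x 3 x size x size float32 blob.

    Assumptions: RGB channel order, values scaled to [0, 1], NCHW layout.
    """
    if face_bgr.shape != (size, size, 3):
        raise ValueError(f"expected an aligned {size}x{size}x3 crop, got {face_bgr.shape}")
    rgb = face_bgr[:, :, ::-1].astype(np.float32) / 255.0  # BGR -> RGB, scale to [0, 1]
    chw = np.transpose(rgb, (2, 0, 1))                      # HWC -> CHW
    return np.ascontiguousarray(chw[np.newaxis, ...])       # add batch dimension -> NCHW


def normalize_embedding(embedding: np.ndarray) -> np.ndarray:
    """L2-normalize an identity embedding and shape it as a 1 x D batch."""
    vec = np.asarray(embedding, dtype=np.float32).reshape(1, -1)
    norm = np.linalg.norm(vec)
    if norm == 0:
        raise ValueError("embedding has zero norm")
    return vec / norm
```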

5. RUN THE MODEL

Running the INSWAPPER_128.ONNX model involves executing the loaded model with the prepared input data to generate the desired outputs.

After loading the model and preparing the input data, users can utilize the ONNX Runtime library to run the model inference.

This typically involves passing the input data to the model and obtaining the model’s predictions or outputs.

Users should ensure that the input data is fed into the model in the correct format and structure as expected by the model’s input layer.

Running the model produces a swapped 128×128 face crop. This crop still has to be postprocessed and pasted back into the original image to produce the final result.
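Once the inputs are ready, running inference is a single `session.run` call. This sketch pairs arrays with the input names reported by the session instead of hard-coding them.

It makes two assumptions to check against your copy of the model. First, it assumes the first input is the face crop and the second is the embedding, following the order used by InsightFace’s wrapper. Second, it assumes both inputs are float32.

```python
from typing import Dict, List, Sequence

import numpy as np


def build_feed(input_names: Sequence[str], arrays: Sequence[np.ndarray]) -> Dict[str, np.ndarray]:
    """Pair input names with arrays in order, as contiguous float32 (assumed input type)."""
    if len(input_names) != len(arrays):
        raise ValueError(f"model expects {len(input_names)} inputs, got {len(arrays)}")
    return {name: np.ascontiguousarray(arr, dtype=np.float32)
            for name, arr in zip(input_names, arrays)}


def run_swap(session, face_blob: np.ndarray, latent: np.ndarray) -> np.ndarray:
    """Run one swap and return the first output (the swapped face crop)."""
    # Assumption: input 0 is the face crop, input 1 the identity embedding
    names: List[str] = [node.name for node in session.get_inputs()]
    feed = build_feed(names, [face_blob, latent])
    outputs = session.run(None, feed)  # None asks for every output
    return outputs[0]
```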

6. POSTPROCESS THE OUTPUT

Postprocessing the output from the INSWAPPER_128.ONNX model is an essential step to refine and enhance the generated results.

After running the model and obtaining the output, users can perform various postprocessing techniques to improve the quality and visual appeal of the swapped faces.

This may include applying filters, adjusting colors and tones, smoothing edges, or enhancing details to make the swapped faces look more natural and seamless.

Additionally, users can explore techniques like image blending or morphing to further refine the output and achieve a desired aesthetic.

Proper postprocessing ensures that the final result from the INSWAPPER_128.ONNX model meets the user’s expectations and aligns with the intended use case, whether it’s for creative projects, entertainment, or professional applications.
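The sketch below is a starting point for postprocessing. It does two things: it undoes the preprocessing layout (float RGB in NCHW back to a uint8 BGR image), and it blends a swapped crop over the original with a feathered edge, one simple way to smooth seams.

It assumes the model output is RGB in [0, 1] and that the crop has already been warped back into place. Real pipelines do that warp with the inverse of the alignment transform, which is not shown here. The 8-pixel border is arbitrary.

```python
import numpy as np


def to_bgr_image(output: np.ndarray) -> np.ndarray:
    """1 x 3 x H x W float RGB output (assumed range [0, 1]) -> H x W x 3 uint8 BGR image."""
    chw = np.clip(output[0], 0.0, 1.0)  # drop the batch dim, guard against overshoot
    rgb = np.transpose(chw, (1, 2, 0))  # CHW -> HWC
    return np.round(rgb[:, :, ::-1] * 255.0).astype(np.uint8)  # RGB -> BGR, back to 0..255


def feather_mask(height: int, width: int, border: int) -> np.ndarray:
    """Weight map: 1.0 in the interior, falling linearly to 0.0 at the edges over `border` px."""
    ys = np.minimum(np.arange(height), np.arange(height)[::-1]).astype(np.float32)
    xs = np.minimum(np.arange(width), np.arange(width)[::-1]).astype(np.float32)
    dist = np.minimum(ys[:, None], xs[None, :])  # distance of each pixel to the nearest edge
    return np.clip(dist / max(border, 1), 0.0, 1.0)


def feather_blend(swapped: np.ndarray, original: np.ndarray, border: int = 8) -> np.ndarray:
    """Blend the swapped crop over the original crop so the seam fades out instead of cutting."""
    mask = feather_mask(swapped.shape[0], swapped.shape[1], border)[..., None]
    mixed = mask * swapped.astype(np.float32) + (1.0 - mask) * original.astype(np.float32)
    return np.round(mixed).astype(np.uint8)
```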

METHOD 2: USING PYTHON

Method 2 involves utilizing Python programming language to leverage the capabilities of the INSWAPPER_128.ONNX model.

Users can start by cloning the InsWapper repository from GitHub, which contains the necessary scripts and tools for working with the model.

Next, they create a Python virtual environment to isolate dependencies and ensure a clean environment for their project.

Users then install the required packages, including onnxruntime-gpu for GPU acceleration if desired.

Once the environment is set up, users can run Python scripts to perform face swapping and restoration tasks using the INSWAPPER_128.ONNX model, providing flexibility and control over the image manipulation process.

1. CLONE THE REPOSITORY

Cloning the repository is the initial step in Method 2, which involves using Python to interact with the INSWAPPER_128.ONNX model.

To clone the repository, users need to access the InsWapper repository hosted on GitHub and use the “git clone” command followed by the repository’s URL.

This action creates a local copy of the repository on the user’s machine, allowing them to access the scripts, code, and resources needed for working with the INSWAPPER_128.ONNX model.

Cloning ensures that users have the latest version of the repository and can start setting up their environment for utilizing the model efficiently in face swapping and restoration tasks.

2. CREATE A PYTHON VIRTUAL ENVIRONMENT

Creating a Python virtual environment is a crucial step in Method 2, where users employ Python to interact with the INSWAPPER_128.ONNX model.

To create a virtual environment, users typically use the “python3 -m venv” command followed by the desired name for the virtual environment.

This action isolates the project’s dependencies and ensures a clean environment with specific package versions separate from the system-wide Python installation.

Once the virtual environment is created, users activate it: with “source <env>/bin/activate” on Linux and macOS, or “<env>\Scripts\activate” on Windows. Any packages installed or changes made are then contained within the virtual environment.

This approach avoids conflicts between the package versions that different projects need, providing a stable and controlled environment for working with the INSWAPPER_128.ONNX model.

3. INSTALL REQUIRED PACKAGES

Installing the required packages is a crucial step in Method 2, which involves using Python to interact with the INSWAPPER_128.ONNX model.

After creating the Python virtual environment, users can install the necessary packages using the “pip install -r requirements.txt” command, which reads the requirements from a specified file and installs them automatically.

These required packages typically include dependencies such as onnxruntime-gpu for GPU acceleration, as well as other libraries and modules necessary for running the INSWAPPER_128.ONNX model effectively.

This step ensures that the project has all the dependencies and tools needed to perform face swapping and restoration tasks seamlessly, optimizing performance and functionality within the Python environment.
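The environment and install steps can also be scripted from Python with the standard-library `venv` and `subprocess` modules, which is handy where shell activation differs between machines. This is a minimal sketch. The `requirements.txt` name matches the file mentioned above. Running pip through the environment’s own interpreter has the same effect as activating the environment first.

```python
import os
import subprocess
import venv


def env_python(env_dir: str) -> str:
    """Path to the interpreter inside a virtual environment."""
    # Windows keeps executables in Scripts\, Linux and macOS in bin/
    if os.name == "nt":
        return os.path.join(env_dir, "Scripts", "python.exe")
    return os.path.join(env_dir, "bin", "python")


def create_env(env_dir: str, with_pip: bool = True) -> str:
    """Same effect as `python3 -m venv <env_dir>`; returns the env's interpreter path."""
    venv.create(env_dir, with_pip=with_pip)
    return env_python(env_dir)


def install_requirements(env_dir: str, requirements: str = "requirements.txt") -> None:
    """Run `pip install -r requirements.txt` with the env's own pip (no activation needed)."""
    subprocess.run([env_python(env_dir), "-m", "pip", "install", "-r", requirements], check=True)
```

For example, call `create_env("venv")` followed by `install_requirements("venv")` from inside the cloned repository.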

4. RUN THE QUICK INFERENCE SCRIPT

Running the quick inference script is a pivotal step in Method 2, where users employ Python to interact with the INSWAPPER_128.ONNX model effectively.

After setting up the virtual environment and installing the required packages, users can execute the quick inference script provided in the InsWapper repository.

This script typically contains the necessary code to load the model, preprocess input data, and perform face swapping or restoration tasks using the INSWAPPER_128.ONNX model.

Users may need to specify input images or parameters within the script to customize the inference process based on their requirements.

Running the quick inference script allows users to quickly test the model’s functionality and obtain preliminary results, paving the way for further customization and integration into larger projects or workflows.
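The repository’s script defines its own command-line options, so check its README for the real flag names. The hypothetical `argparse` skeleton below only illustrates the kind of parameters such a script usually takes: source and target images, an output path, the model path, and a switch for face restoration. None of these flag names are taken from the InsWapper repository.

```python
import argparse
from typing import List, Optional


def build_parser() -> argparse.ArgumentParser:
    # Hypothetical flags for illustration only
    parser = argparse.ArgumentParser(description="Hypothetical quick-inference CLI for inswapper_128.onnx")
    parser.add_argument("--source", required=True, help="image whose face identity is used")
    parser.add_argument("--target", required=True, help="image whose face is replaced")
    parser.add_argument("--output", default="result.png", help="where to write the swapped image")
    parser.add_argument("--model", default="inswapper_128.onnx", help="path to the ONNX model")
    parser.add_argument("--restore", action="store_true", help="run a face-restoration pass afterwards")
    return parser


def parse(argv: Optional[List[str]] = None) -> argparse.Namespace:
    """Parse argv (defaults to sys.argv[1:] when None)."""
    return build_parser().parse_args(argv)
```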

5. IMPROVE FACE QUALITY WITH FACE RESTORATION

Improving face quality with face restoration is a key aspect of using the INSWAPPER_128.ONNX model effectively.

This feature allows users to enhance the swapped faces by restoring details, refining textures, and improving overall visual fidelity.

To use this feature, users run a separate face-restoration model, such as CodeFormer or GFPGAN, on the swapped result. Some repositories and front ends can apply this step automatically after the swap.

These models typically combine techniques like image enhancement, denoising, and feature refinement to achieve more realistic and appealing results.

By incorporating face restoration into the workflow, users can make swapped faces noticeably sharper and more natural, which helps offset the 128×128 resolution of the swap itself.
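Restoration models such as GFPGAN or CodeFormer can over-smooth skin, so some front ends let you mix the restored face with the raw swap. The sketch below shows that blend in NumPy. `strength` is a hypothetical parameter name, and the restoration model that produces `restored` is not shown.

```python
import numpy as np


def blend_restoration(swapped: np.ndarray, restored: np.ndarray, strength: float = 0.7) -> np.ndarray:
    """Mix a restored face over the raw swap: 0 keeps the swap, 1 uses only the restored face."""
    if swapped.shape != restored.shape:
        raise ValueError("restored image must have the same shape as the swapped image")
    if not 0.0 <= strength <= 1.0:
        raise ValueError("strength must be between 0 and 1")
    mixed = strength * restored.astype(np.float32) + (1.0 - strength) * swapped.astype(np.float32)
    return np.clip(np.round(mixed), 0, 255).astype(np.uint8)
```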

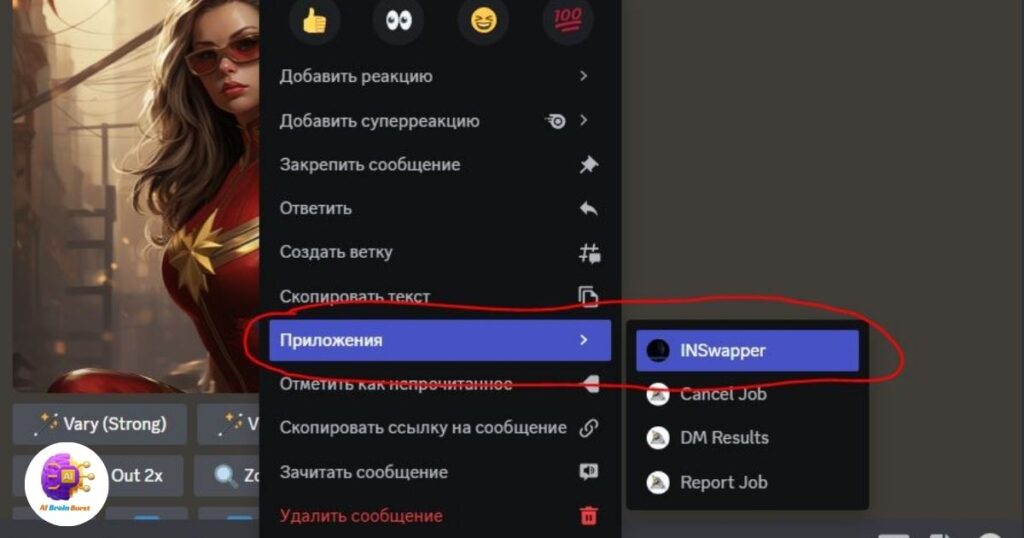

METHOD 3: MIDJOURNEY (DISCORD)

Method 3, utilizing Midjourney via Discord, offers an alternative approach to using the INSWAPPER_128.ONNX model for face swapping and restoration tasks.

With this method, users work with InsightFace’s face-swap bot on Discord. The bot can be used on images posted in a server, including images generated with Midjourney.

By selecting the InsWapper app from an image’s context menu in Discord, users can swap faces directly in the chat, without installing anything locally.

This method simplifies the process for users who prefer a more interactive and collaborative environment, allowing them to experiment with different faces and settings effortlessly.

Additionally, users can edit and test the swapped faces within Discord, ensuring a streamlined and efficient workflow for image manipulation tasks using the INSWAPPER_128.ONNX model.

1. ACCESS THE INSIGHTFACE BOT

Accessing InsightFace’s Discord bot is the first step in Method 3, which uses Discord (often alongside Midjourney) to work with the INSWAPPER_128.ONNX model.

Users can access the bot in two ways: by adding it to a Discord server they manage, or by joining a server where it is already available. In either case, they follow the instructions InsightFace provides.

This step typically involves authorizing the bot for the server, so that its InsWapper app appears in the context menu of images posted there.

Accessing the bot lets users run the INSWAPPER_128.ONNX model within Discord, enabling efficient and collaborative face-swapping without a local installation.

2. SELECT THE INSWAPPER APP

After gaining access to InsightFace’s bot in Method 3, users can proceed by selecting the InsWapper app.

In Discord, this means right-clicking (or long-pressing on mobile) the message that contains the target image, opening the Apps submenu, and choosing InsWapper.

Choosing the InsWapper app lets users perform face swaps directly in Discord.

This selection step is crucial because it applies the INSWAPPER_128.ONNX model to the chosen image, providing a convenient, integrated approach to image manipulation in Discord.

3. CHOOSE THE FACE TO SWAP

Once the InsWapper app is available in Method 3, users can choose the face they want to swap into their images.

This step typically involves registering the source face with the bot beforehand; its /saveid command stores a face under a name from an uploaded photo. If several faces are saved, users then choose which one to apply.

Users may have options to select specific regions or features within the image for swapping, allowing for fine-tuned control over the face-swapping process.

Choosing the face to swap is a crucial step as it determines the target area for the INSWAPPER_128.ONNX model to perform its face-swapping algorithm, ensuring accurate and desired results in the final output image.

4. EDIT AND TEST

After selecting the face to swap in Method 3 with the InsWapper app in Discord, users can proceed to edit and test the results.

This step involves making any desired adjustments to the swapped face. Examples include adjusting colors or tones in an image editor, trying a different source photo, or applying additional effects for a more natural or artistic look.

Users can then preview the edited image to judge the quality and realism of the face swap and confirm it meets their expectations.

Editing and testing the swapped face lets users refine the results and make any necessary tweaks before finalizing and saving the image, ensuring a satisfactory output from the INSWAPPER_128.ONNX model.

KNOWN ISSUES AND LIMITATIONS WITH INSWAPPER_128.ONNX

1. SAFETY CONCERNS

Safety concerns regarding the INSWAPPER_128.ONNX model primarily revolve around potential misuse or unethical use of the model’s capabilities.

Some users have expressed apprehension about the model’s ability to create misleading or inappropriate content, such as deepfake images or manipulated visuals.

These concerns stem from the widespread use of AI models like INSWAPPER_128.ONNX in generating realistic-looking but synthetic content, which can be exploited for malicious purposes or deceptive practices.

However, it’s essential to note that these safety concerns are not inherent to the model itself but rather arise from how it is utilized and the ethical considerations surrounding its usage.

- Misuse for creating deceptive content: The model’s advanced capabilities can be misused to create deepfake images or misleading visuals, posing risks in terms of misinformation and fraud.

- Ethical considerations: Users need to adhere to ethical guidelines and responsible usage practices when utilizing the model to avoid potential harm or negative consequences.

- Regulatory concerns: The proliferation of AI models like INSWAPPER_128.ONNX has raised regulatory concerns regarding data privacy, consent, and the impact of synthetic media on society, necessitating thoughtful regulations and policies to address these issues effectively.

2. MODEL AVAILABILITY

Model availability refers to the accessibility and availability of different versions or resolutions of the INSWAPPER model, such as INSWAPPER_256 or INSWAPPER_512, compared to the widely available INSWAPPER_128.ONNX model.

The limited availability of higher-resolution models may impact the quality of face-swapping results, especially for high-resolution images where finer details are crucial.

Users may face challenges in obtaining access to these higher-resolution models due to factors like licensing, distribution, or development constraints, leading to a reliance on the more commonly accessible INSWAPPER_128.ONNX model.

- Quality limitations: The absence of higher-resolution models like INSWAPPER_256 or INSWAPPER_512 may limit the quality and fidelity of face-swapping results, particularly for detailed or complex images.

- Licensing and distribution: Challenges related to licensing agreements or distribution channels may restrict the availability of certain models, affecting users’ options for choosing the most suitable model for their needs.

- Development constraints: Developing and maintaining higher-resolution models may require significant computational resources, time, and expertise, contributing to the limited availability of such models compared to the INSWAPPER_128.ONNX model.

3. MODEL PERFORMANCE

Model performance refers to the effectiveness and efficiency of the INSWAPPER_128.ONNX model in executing face-swapping and restoration tasks.

Users have reported varying experiences regarding the model’s performance, with some expressing satisfaction with its capabilities, while others have raised concerns about its performance compared to other versions or platforms.

Factors influencing model performance include computational resources, optimization techniques, and the complexity of the face-swapping task.

Users may encounter issues such as longer processing times, suboptimal results, or resource-intensive operations depending on the hardware specifications and the complexity of the images being processed.

- Computational requirements: The model’s performance can be affected by the availability of computational resources, such as CPU or GPU capabilities, influencing processing speed and efficiency.

- Optimization techniques: Effective optimization techniques and algorithms can enhance the model’s performance by reducing processing time and resource utilization, improving overall user experience.

- Complexity of tasks: Face-swapping tasks involving high-resolution images or intricate details may pose challenges and impact the model’s performance, requiring additional processing time or computational power to achieve satisfactory results.
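When comparing hardware or execution providers, measuring is more reliable than guessing. This small timing helper uses only the Python standard library. Wrap whatever you want to measure in a zero-argument callable, for example `lambda: session.run(None, feed)`. The warm-up and run counts are arbitrary defaults.

```python
import statistics
import time
from typing import Callable, Dict


def benchmark(fn: Callable[[], object], warmup: int = 2, runs: int = 10) -> Dict[str, float]:
    """Time repeated calls to `fn` and report mean, median and max latency in milliseconds."""
    if runs < 1:
        raise ValueError("runs must be at least 1")
    for _ in range(warmup):  # early calls may include lazy initialization, so discard them
        fn()
    timings = []
    for _ in range(runs):
        start = time.perf_counter()
        fn()
        timings.append((time.perf_counter() - start) * 1000.0)
    return {
        "mean_ms": statistics.mean(timings),
        "median_ms": statistics.median(timings),
        "max_ms": max(timings),
    }
```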

4. ACCESSIBILITY

Accessibility refers to the ease and convenience with which users can access and utilize the INSWAPPER_128.ONNX model for face-swapping and restoration tasks.

Users report mixed experiences with accessibility, shaped by factors such as platform compatibility, download availability, and user interface design.

Challenges related to accessibility may include difficulties in downloading the model, compatibility issues with different environments or tools, and navigating complex setup processes.

Ensuring accessibility is crucial for promoting widespread adoption and usage of the model among diverse user groups, including developers, researchers, and hobbyists.

- Download availability: Users may encounter challenges in accessing or downloading the INSWAPPER_128.ONNX model from designated platforms or repositories, impacting their ability to use the model effectively.

- Compatibility issues: Compatibility with different tools, libraries, and platforms can influence the accessibility of the model, requiring users to address compatibility issues for seamless integration and usage.

- User-friendly interface: A user-friendly interface and clear documentation can enhance the accessibility of the model by simplifying setup procedures and providing guidance for users with varying levels of expertise.

IS INSWAPPER_128 AN NSFW MODEL?

No, the INSWAPPER_128 model itself is not classified as an NSFW (Not Safe For Work) model.

Its primary function is to perform face-swapping and restoration tasks, and it does not inherently contain or generate explicit or adult content.

However, it’s essential to note that the use of the model can potentially lead to the creation of NSFW content if it is utilized to swap faces in explicit or adult images.

This concern is common with any AI model that can manipulate images, as users need to exercise caution and responsibility when using such tools.

It’s crucial for users to be aware of the ethical implications and potential consequences of using the INSWAPPER_128 model in contexts that may lead to the creation of NSFW content.

Responsible usage involves ensuring that the model is used in appropriate and ethical ways, respecting privacy, consent, and societal norms.

Additionally, platforms or communities where the model is utilized may have guidelines or policies regarding NSFW content, and users should adhere to these guidelines to promote ethical and responsible use of AI technologies.

HOW IS INSWAPPER_128 DIFFERENT FROM ROOP?

The INSWAPPER_128 model and Roop operate at different levels of AI-driven image manipulation.

INSWAPPER_128 is a model: a single ONNX file that swaps one aligned face crop at 128×128 resolution.

It is widely used as the swapping engine inside other tools.

Roop, on the other hand, is an open-source application built on this model. It adds face detection, video frame processing, and optional face enhancement, so users can replace a face in an image or video using a single source photo.

It does not train a new model per person, which made it popular among creators and hobbyists who want quick results.

The primary difference, therefore, is scope: INSWAPPER_128 performs the core face swap, while Roop is a complete tool that applies it to images and videos.

Users who want to build their own pipeline would work with INSWAPPER_128 directly, while those who want a ready-made application would choose Roop or a similar front end.

Conclusion

In conclusion, the INSWAPPER_128.ONNX model offers a practical solution for face swapping. It provides a one-shot workflow, broad tool support, and the portability of the ONNX format, and it pairs well with separate face-restoration models.

Its capabilities are focused on enhancing image manipulation tasks with efficiency and quality, making it a valuable tool for creators, developers, and hobbyists alike.

However, users should remain mindful of ethical considerations and responsible usage practices, especially regarding the creation of NSFW content and adherence to platform guidelines.

Roop, by contrast, is an application built on top of this model that applies it to images and videos. The choice between them depends on whether you want a component for your own pipeline or a ready-made tool.

Both serve different needs within the AI-driven image manipulation landscape, offering users diverse options based on their specific requirements and desired outcomes.

Ultimately, users can leverage these models responsibly to achieve their creative or professional goals, ensuring a balance between innovation and ethical considerations in AI technology usage.

FAQs

How do I load an ONNX model?

To load an ONNX model, you can use libraries like ONNX Runtime in Python. First, install ONNX Runtime (pip install onnxruntime). Then run import onnxruntime as ort and load the model with ort.InferenceSession('model.onnx', providers=['CPUExecutionProvider']).

How do I save a model in ONNX format?

Saving a model in ONNX format involves exporting it from a compatible framework like PyTorch or TensorFlow. For PyTorch, you can use torch.onnx.export(model, input_tensor, 'model.onnx'), ensuring the model’s architecture and weights are preserved.

How do I create an ONNX file?

You can create an ONNX file by exporting a trained model from a supported deep learning framework. PyTorch provides torch.onnx.export, while TensorFlow and Keras models are usually converted with the separate tf2onnx package.

What is ONNX file format?

The ONNX file format is a standardized format for representing deep learning models, allowing interoperability between different frameworks. It includes model architecture, weights, and computational graph information in a portable format.

How do ONNX files work?

ONNX files work by encapsulating the structure and parameters of a trained machine learning model, enabling seamless deployment and execution across various platforms and hardware devices using compatible runtimes like ONNX Runtime.

Why do we use ONNX?

ONNX is used for its ability to facilitate model interoperability: developers can train models in one framework and deploy them with another runtime without rewriting them. It promotes collaboration and speeds up the development and deployment of AI models.

Why should I use ONNX?

Using ONNX adds flexibility to AI model development, enabling easier integration with different tools and platforms. It also helps keep your models portable, as ONNX is supported by a wide range of frameworks, libraries, and hardware accelerators.